I was trying to update my Azure Language service to enable Custom text classification / Custom Named Entity Recognition. That feature requires a storage account. While you are supposed to be able to create the storage account when you enable the feature, it didn’t work for me 🙁 (I was getting an “Invalid user storage id or storage type is not supported” error).

Continue reading Azure: Invalid user storage id or storage type is not supported

Use ChatGPT to generate sample monitoring data

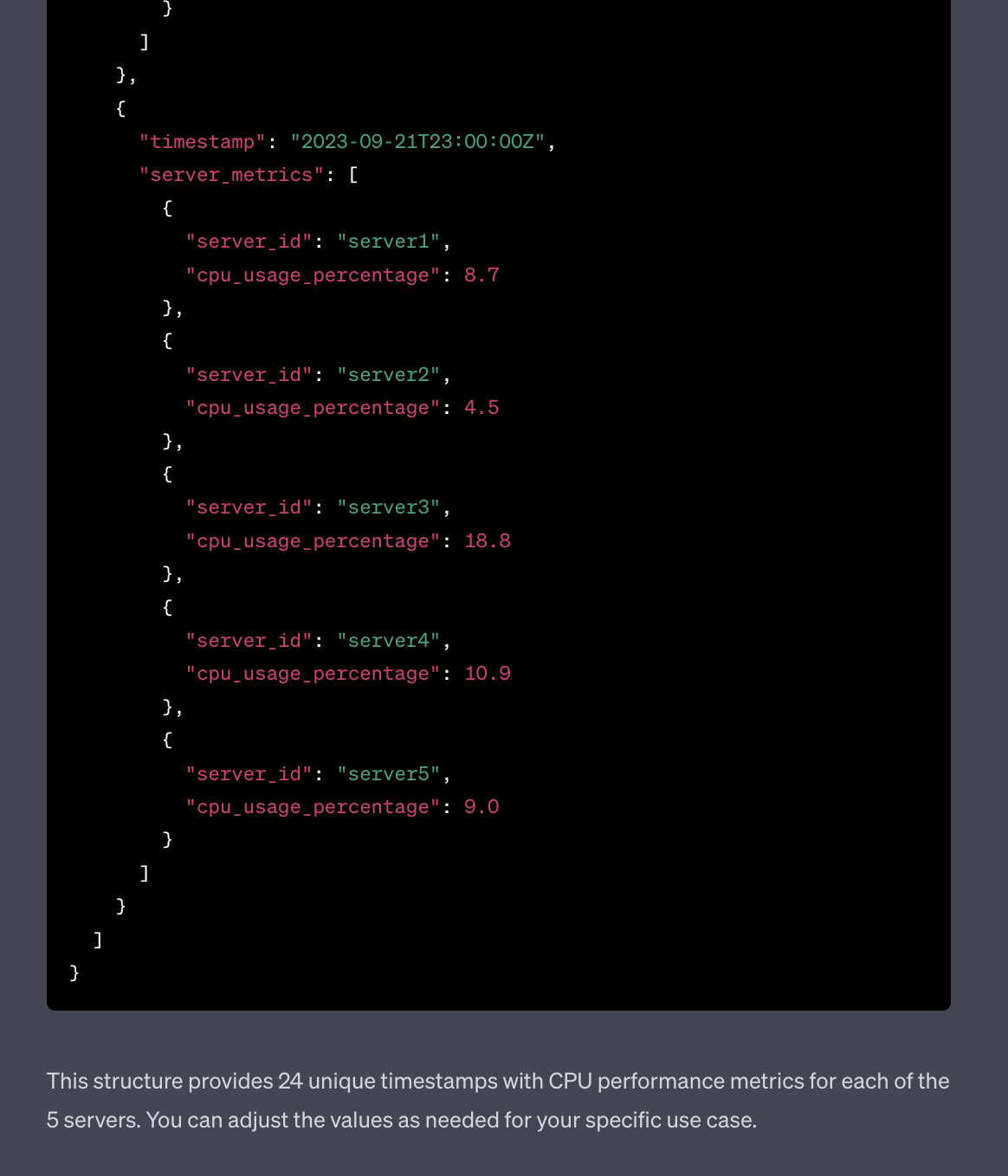

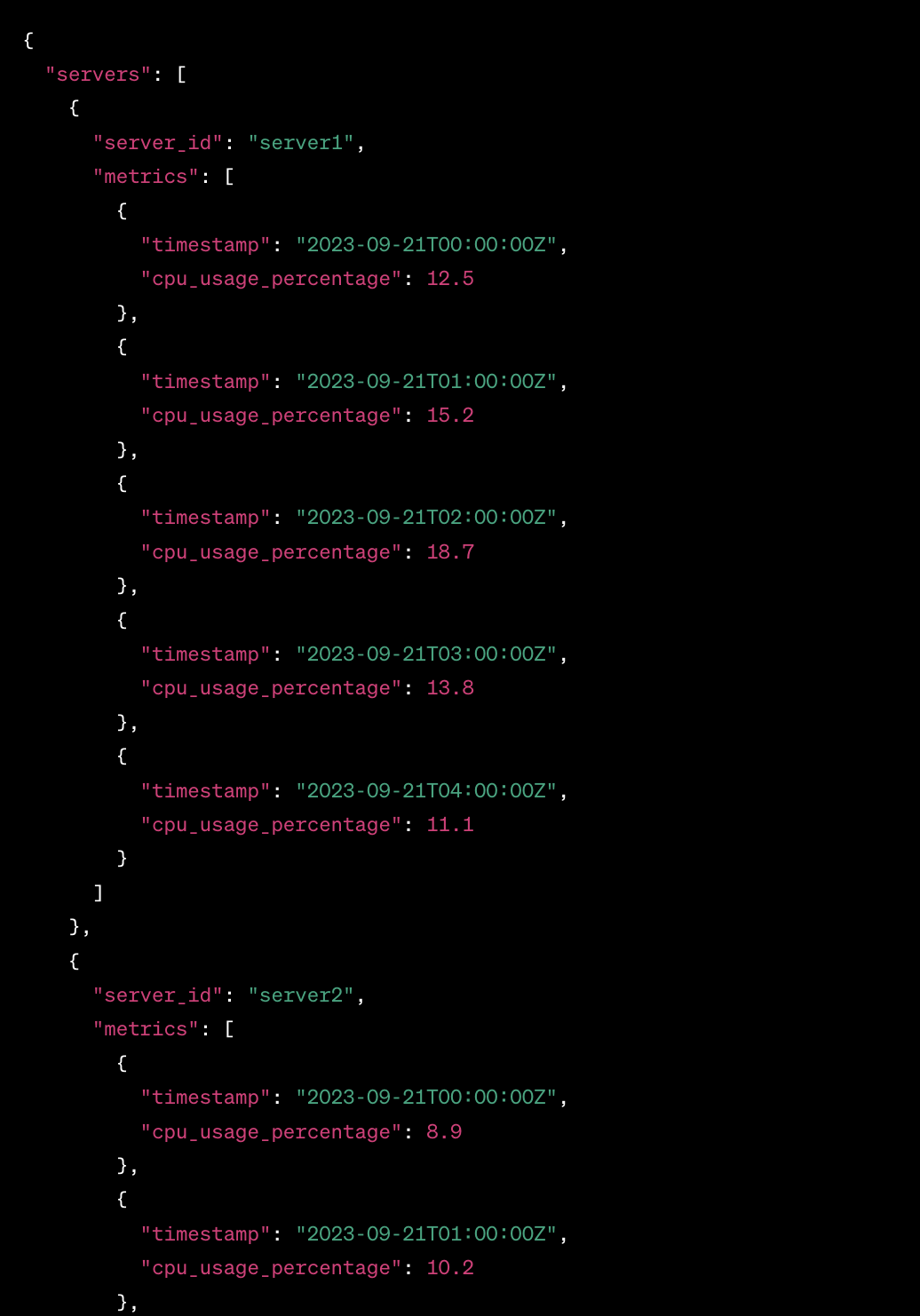

I wanted to get some sample data and was too lazy to use generators or to craft it by hand, so I decided to try and use ChatGPT to generate sample monitoring data.

I started with this prompt:

act as an application and infracture monitoring platform synthetic data generator. All you responses need to be in a valid JSON format. Generate CPU performance metrics for 5 servers over last 24 hours

The result was actually OK

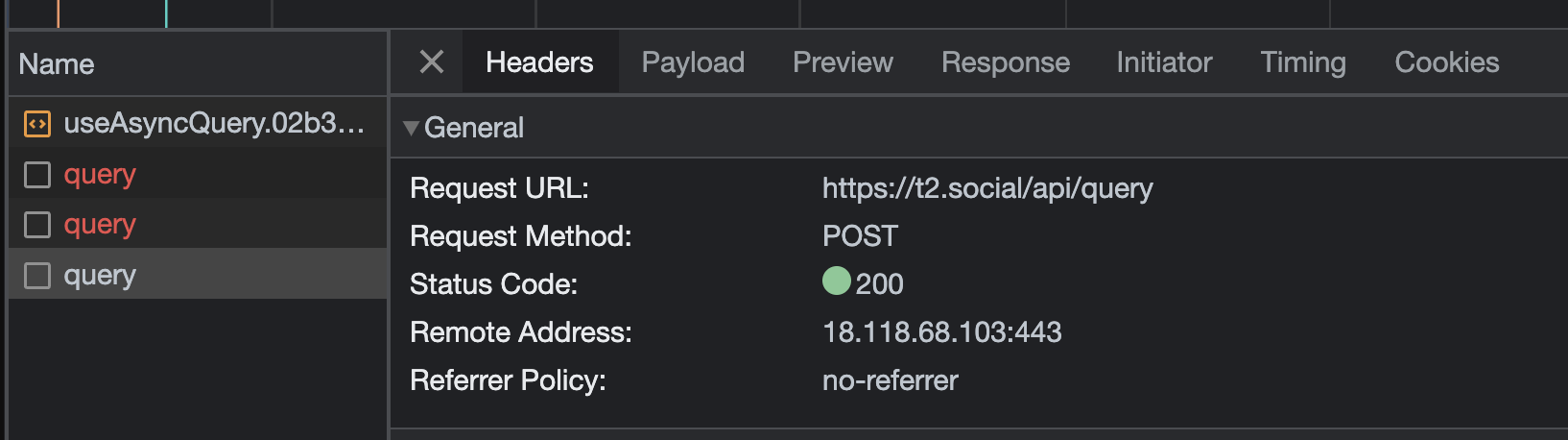
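For comparison, here is a minimal local sketch of the kind of data the prompt asks for: hourly CPU metrics for 5 servers over the last 24 hours, emitted as valid JSON. The field names (`server`, `timestamp`, `cpu_percent`), the server naming and the load distribution are my own assumptions for illustration, not the structure ChatGPT returned.

```python
import json
import random
from datetime import datetime, timedelta, timezone


def generate_cpu_metrics(servers=5, hours=24, seed=42, end=None):
    """Return hourly CPU utilisation samples for each server as a list of dicts."""
    rng = random.Random(seed)  # seeded so repeated runs give the same values
    if end is None:
        end = datetime.now(timezone.utc).replace(minute=0, second=0, microsecond=0)
    records = []
    for n in range(1, servers + 1):
        baseline = rng.uniform(20, 60)  # hypothetical average load per server
        for h in range(hours, 0, -1):
            # Noisy sample around the baseline, clamped to a valid percentage
            value = min(100.0, max(0.0, rng.gauss(baseline, 10)))
            records.append({
                "server": f"server-{n:02d}",  # hypothetical naming scheme
                "timestamp": (end - timedelta(hours=h)).isoformat(),
                "cpu_percent": round(value, 2),
            })
    return records


if __name__ == "__main__":
    print(json.dumps(generate_cpu_metrics(), indent=2))
```

With the defaults this produces 120 records (5 servers × 24 hourly samples), which is handy as a baseline to sanity-check whatever the model returns.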

Discovering the T2.social API

So I’ve joined T2 (now Pebble) to try it out and it was pretty quiet there at the beginning. It was a bit hard to see whom to follow and such. So I decided to look a bit behind the curtain and see if T2 Social has an API.

By the way, if you need an invite reach out to me either via comments here or on Twitter @IlyaReshet.

Is there a T2 official API?

While there is no official, documented API that I could find (at least at the time of writing, which is the beginning of June 2023), I had an idea to look at the Network tab in the Chrome Developer Console.

Continue reading Discovering the T2.social API

Additional zoom levels for maps in Splunk

Splunk’s default Cluster Map’s maximum zoom level is 7, which will let you see major cities in a country.

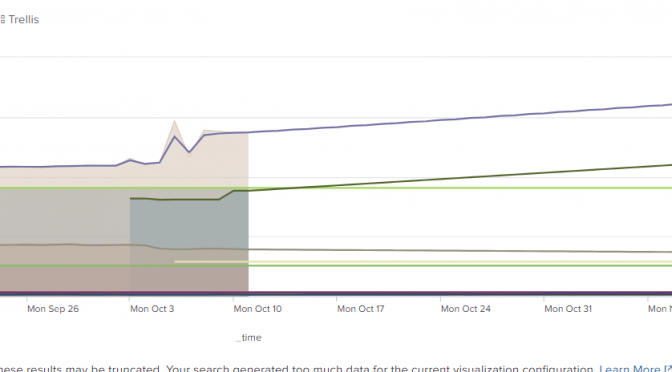

Predicting multiple metrics in Splunk

Splunk has a predict command that can be used to predict a future value of a metric based on historical values. This is not Machine Learning or Artificial Intelligence functionality, but plain old statistical analysis.

So if we have a single metric, based on historical results we can produce a nice prediction for the future (of definable span), but predicting multiple metrics in Splunk might not be as straightforward.

Continue reading Predicting multiple metrics in Splunk

Splunk Failed to apply rollup policy to index… Summary span… cannot be cron scheduled

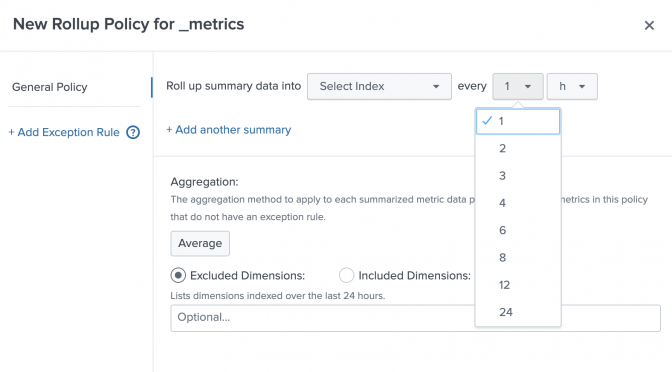

I started playing with Splunk Metrics rollups, but then tried to step out of the box and got a “Failed to apply rollup policy to index=’…’. Summary span=’7d’ cannot be cron scheduled” error.

Continue reading Splunk Failed to apply rollup policy to index… Summary span… cannot be cron scheduled

How to collect StatsD metrics from rippled server using Splunk

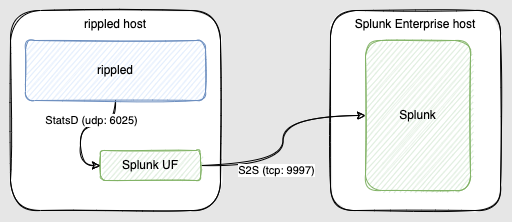

The XRP Ledger (XRPL) is a decentralized, public blockchain, and the rippled server software (just rippled from here on) powers it. rippled follows the peer-to-peer network, processes transactions, and maintains some ledger history.

rippled is capable of sending its telemetry data using StatsD protocol to 3rd party systems like Splunk.
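To give an idea of what travels over the wire, here is a minimal sketch of parsing StatsD datagrams in their common plain-text form, `name:value|type` with an optional `|@sample_rate`. The metric names in the usage note are made up for illustration and are not actual rippled metric names.

```python
def parse_statsd_line(line):
    """Split one StatsD line of the form name:value|type[|@rate] into a dict."""
    name, _, rest = line.strip().partition(":")
    parts = rest.split("|")
    if not name or len(parts) < 2:
        raise ValueError(f"not a StatsD metric line: {line!r}")
    try:
        value = float(parts[0])
    except ValueError:
        value = parts[0]  # e.g. set members may be arbitrary strings
    metric = {"name": name, "value": value, "type": parts[1]}
    for extra in parts[2:]:
        if extra.startswith("@"):  # optional sample rate, e.g. |@0.1
            metric["sample_rate"] = float(extra[1:])
    return metric


def parse_statsd_packet(payload):
    """Parse a datagram payload (bytes) that may hold several newline-separated metrics."""
    lines = payload.decode("utf-8").splitlines()
    return [parse_statsd_line(line) for line in lines if line.strip()]
```

For example, `parse_statsd_line("rippled.example_gauge:42|g")` yields a gauge named `rippled.example_gauge` with value `42.0`. Note that this sketch does not preserve the sign prefix StatsD uses for relative gauge updates (`+4` is parsed as plain `4.0`).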

Continue reading How to collect StatsD metrics from rippled server using Splunk

Plotting Splunk with the same metric and dimension names shows NULL

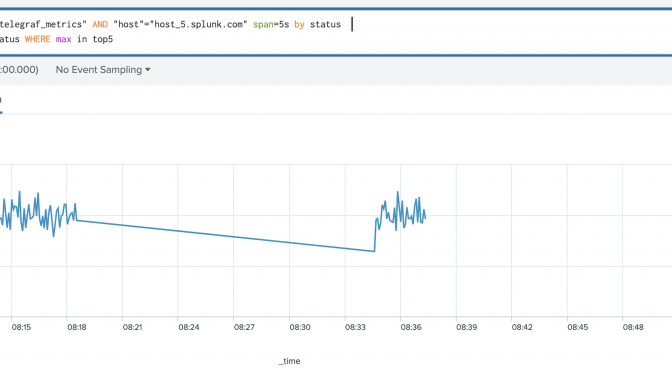

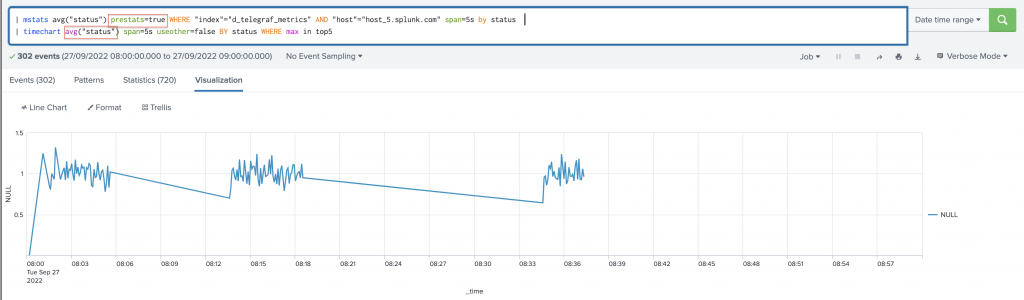

When you plot a Splunk metric on a graph and split it by a dimension that has the same name as the metric itself, the chart shows NULL instead of the dimension.

How to use an SSH key stored in Azure Key Vault while building Azure Linux VMs using Terraform

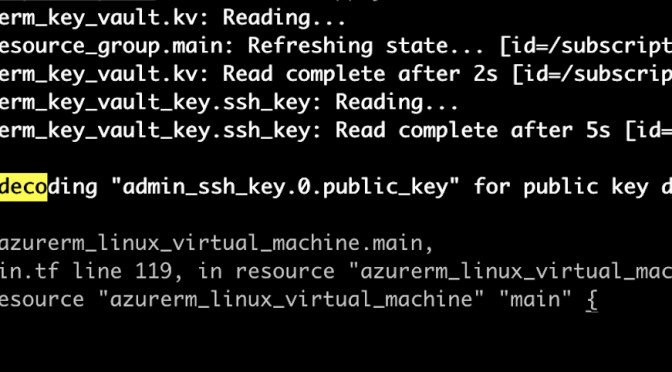

So I want to use the same SSH public key to authenticate across multiple Linux VMs that I’m building in Azure with Terraform. While I did find a lot of examples (including in the Terraform examples repo) of how to do it if you have the key stored on your local machine, I couldn’t find (or didn’t search long enough for) how to use an SSH key stored in Azure Key Vault while building Azure Linux VMs using Terraform.

Continue reading How to use an SSH key stored in Azure Key Vault while building Azure Linux VMs using Terraform

How to customise Breville Barista PRO Expresso Shot Size

If you ever wished to customise the Breville Barista Pro espresso shot size, but didn’t know how, you’ve come to the right place.

Continue reading How to customise Breville Barista PRO Expresso Shot Size